24 Aug 2020


research


Two positions on MEMEX Project (Post Doc and Engineer)


We have two exciting positions open on the MEMEX EU Project! The first is for an engineer to coordinate with the project consortium and develop an innovative app exposing Cultural Heritage and project participants' stories. The second is for a post-doc to research and develop algorithms for 3D Scene Understanding! Both are opportunities to work with us and create impactful tools and research.

About the project…

The MEMEX project promotes social cohesion through collaborative, heritage-related tools that provide inclusive access to tangible and intangible cultural heritage and, at the same time, facilitate encounters, discussions and interactions between communities at risk of social exclusion. These tools will empower communities of people with the possibility of welding together their fragmented experiences and memories into compelling and geolocalised storylines using new personalised digital content linked to the pre-existent European Cultural Heritage (CH). The tools of MEMEX will allow the communities to tell their stories and to claim their rights and equal participation in the European society. To this end, MEMEX will nurture actions that contribute to, rather than undermine, practices of recognition of differences by giving voice to individuals for promoting cultural diversity.

Project Site: https://memexproject.eu/

Post Doc

The research topics on 3D scene understanding build on recent PAVIS achievements in Spatial AI: 3D semantic mapping, 3D object localization, graph neural networks (GNNs) for scene modelling, and active visual reasoning that integrates deep learning models with multi-view geometry approaches. These methods will leverage massive worldwide geolocalised data provided by project partners, with the final goal of deploying a mobile app that understands, localizes and reasons about the semantic elements present in a generic scene.

Engineer

The candidate will have the opportunity to work on realistic problems involving leading European research centers and companies, with the aim of creating a novel AR platform able to understand the semantic scene structure of the environment using an RGB video stream from a smartphone.

Application Details

To apply, follow the instructions indicated at the following links:

Engineer Position: https://iit.taleo.net/careersection/ex/jobdetail.ftl?lang=en&job=2000002M

Post Doc Position: https://iit.taleo.net/careersection/ex/jobdetail.ftl?lang=en&job=2000002K

18 May 2020


research


New PhD Position Available in Visual Reasoning!


Exciting news, for me and possibly for you! This post marks my first PhD call in which I will be leading the research direction of the successful candidate, in collaboration with Alessio Del Bue (IIT) and Sebastiano Vascon (Ca’ Foscari University of Venice). We are looking for someone interested in pursuing a PhD in Visual Reasoning to join the Pattern Analysis and Computer Vision Department of the Italian Institute of Technology (IIT). The University of Genova is the awarding body of this PhD, so please check the details carefully on the University of Genova website.

Theme F: Visual Reasoning with Knowledge and Graph Neural Networks for scene understanding

The ability of machines to detect objects within images has surpassed human ability; however, when posed with more complex tasks, even relatively simple ones, machines quickly struggle. This theme focuses on developing AI systems that are able to access knowledge stored in Knowledge Graphs to understand the world around the camera view. Few works have successfully integrated knowledge into Computer Vision systems, and knowledge graphs provide one avenue. This research will study methods to integrate knowledge for user interaction via retrieval or visual question answering within real-world environments. In particular, shallow and deep graph-based methodologies are a promising computational framework for including external knowledge while maintaining a high degree of interpretability, a necessary feature for modern AI systems.

** There are other themes; check them out if you would like to work in our department **

Application Details

To apply, follow the instructions at the following links, where the notice of public examination is published in Italian and in English:

https://unige.it/en/usg/en/phd-programmes

https://unige.it/en/students/phd-programmes

In short, the documentation to be submitted is a detailed CV, a research proposal under one or more themes chosen among Theme F or the other themes (please see also the project proposal template at the link indicated below), reference letters, and any other formal document concerning the degrees earned.

Please note that these documents are mandatory for the application to be considered valid.

IMPORTANT: In order to apply, candidates must prepare a research proposal based on the research topics mentioned above.

Please follow these guidelines to prepare it:

https://pavisdata.iit.it/data/phd/ResearchProjectTemplate.pdf

ONLINE APPLICATION DEADLINE is June 15th, 2020 at 12:00 p.m. (noon, Italian time/CEST)

STRICT DEADLINE, NO EXTENSION.

Additional Links:

Disclaimer

Please note that your in-name supervisor will be Dr. Alessio Del Bue, who leads the Pattern Analysis and Computer Vision department; however, your research activities will be led by myself. The Italian application process applies to a call, not to a tutor/supervisor, and supervisors are selected based on who is most suitable.

10 Jan 2020


research


And it begins... MEMEX


Today we had our Kick-off Meeting for the MEMEX EU Project. Here is the abstract:

MEMEX promotes social cohesion through collaborative, heritage-related storytelling tools that provide access to tangible and intangible Cultural Heritage (CH) for communities at risk of exclusion. The project implements new actions for social science to: understand the NEEDS of such communities and co-design interfaces to suit their needs; DEVELOP the audience through participation strategies; while increasing the INCLUSION of communities. The fruition of this will be achieved through groundbreaking ICT tools that provide a new paradigm for interaction with CH for all end users. MEMEX will create new assisted Augmented Reality (AR) experiences in the form of stories that intertwine the memories (expressed as videos, images or text) of the participating communities with the physical places / objects that surround them. To reach these objectives, MEMEX will develop techniques to (semi-)automatically link images to their LOCATION and connect them to a new open-source Knowledge Graph (KG). The KG will facilitate assisted storytelling by means of clustering that consistently links user data and CH assets in the KG. Finally, stories will be visualised on smartphones by AR on top of the real world, allowing users to TELL an engaging narrative. MEMEX will be deployed and demonstrated on three pilots with unique communities. First, Barcelona’s Migrant Women, which raises the gender question around their inclusion in CH, giving them a voice to valorise their memories. Secondly, MEMEX will give the inhabitants of Paris’s XIX district, one of the largest immigrant settlements of Paris, access to digital heritage repositories of over 1 million items to develop co-authored new history and memories connected to the artistic history of the district. Finally, first, second and third generation Portuguese migrants living in Lisbon will provide insights on how technology tools can enrich the lives of the participants.

More information coming soon at www.memexproject.eu or on EU Cordis https://cordis.europa.eu/project/id/870743

25 Sep 2019


research


Review in a week*


For a long time now, I have been reviewing articles for a variety of journals and conferences, which is “usually” tracked within Publons. While I try hard to get reviews completed before the deadline, when it comes to journals this often happens in the final few days before the deadline. This adds significant stress and anxiety to my academic life, as I’m constantly worrying about the tasks I have to do and, in some cases, staying up late to get the review completed. So, as I review my activities and consider how I can improve the way I review, I propose (not originally) review in a week*.

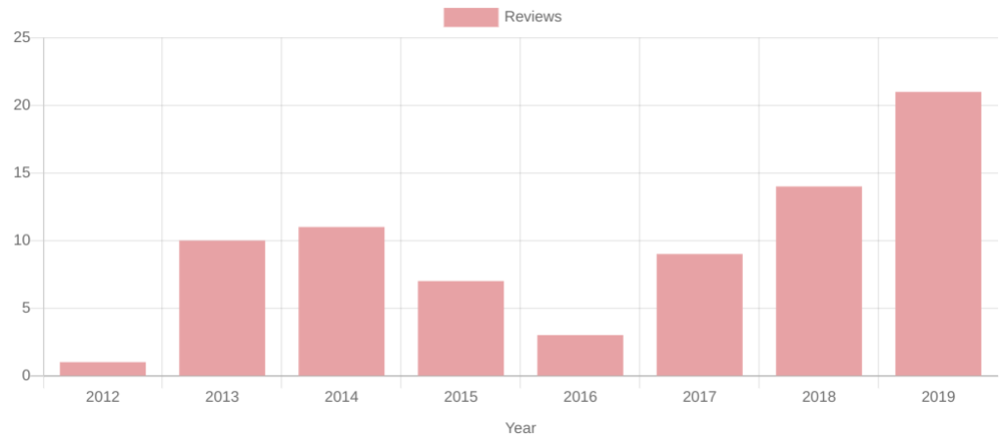

As the number of articles I review per year (generally) steadily increases, it becomes more critical to review effectively.

So I put this out there: going forward, my goal is to review journal articles within a week* of accepting them. Anyone even partially observant will have noticed that I am diligent in including an asterisk after this statement. Naturally, this is for a good reason: it isn’t sensible to hold a 20+ page article to this goal, nor a review that I know I cannot complete in time due to other commitments, e.g. travel. However, within a week of office time, the review will be complete.

There is a second part to this: saying “No”. If I’m accepting articles I’m not excited about, then this goal will be significantly harder to achieve. Therefore, hand-in-hand, I will be more proactive in saying no to articles that don’t interest me.

I consider it essential to evaluate any objective; therefore, at the end of 2019, I will review my progress. This gives me a wonderful excuse to create graphs of my progress (which all academics enjoy), but I will have to put some thought into what to plot.

So Journals! Let’s see what you have in store for September-December of 2019.

18 Apr 2018


research


Organising VisArt @ ECCV'18!


This year I’m very excited to be organising the workshop VISART IV with several other great chairs:

- Alessio Del Bue, Istituto Italiano di Tecnologia (IIT);

- Leonardo Impett, EPFL & Bibliotheca Hertziana, Max Planck Institute for Art History;

- Peter Hall, University of Bath;

- Joao Paulo Costeira, ISR, Instituto Superior Técnico;

- Peter Bell, Friedrich-Alexander University Erlangen-Nürnberg.

We hope that this year pushes the collaboration between Computer Vision, Digital Humanities and Art History further, with the aim of generating some fantastic new partnerships to be published at this workshop and future ones.

This closer collaboration is exemplified by the new track, which allows Digital Humanities (DH) and Art History researchers to join the conversation about what they are doing with Computer Vision and how we can help them in the future. I’m very excited to see what gets submitted.

So here is the Call for Papers, enjoy!

VISART IV “Where Computer Vision Meets Art” Pre Announcement

4th Workshop on Computer VISion for ART Analysis

In conjunction with the 2018 European Conference on Computer Vision (ECCV), Cultural Center (Kulturzentrum Gasteig), Munich, Germany

IMPORTANT DATES

- Full & Extended Abstract Paper Submission: July 9th 2018

- Notification of Acceptance: August 3rd 2018

- Camera-Ready Paper Due: September 21st 2018

- Workshop: 9th September 2018

CALL FOR PAPERS

Following the success of the previous editions of the Workshop on Computer VISion for ART Analysis held in 2012, 2014 and 2016, we present the VISART IV workshop, in conjunction with the 2018 European Conference on Computer Vision (ECCV 2018). VISART will continue its role as a forum for the presentation, discussion and publication of computer vision techniques for the analysis of art. In contrast with prior editions, VISART IV will expand its remit, offering two tracks for submission:

- Computer Vision for Art - technical work (standard ECCV submission, 14 pages, excluding references)

- Uses and Reflection of Computer Vision for Art (extended abstract, 4 pages, excluding references)

The recent explosion in the digitisation of artworks highlights the concrete importance of applications in the overlap between computer vision and art, such as the automatic indexing of databases of paintings and drawings, or automatic tools for the analysis of cultural heritage. Such an encounter, however, also opens the door both to a wider computational understanding of the image beyond photo-geometry, and to a deeper critical engagement with how images are mediated, understood or produced by computer vision techniques in the ‘Age of Image-Machines’ (T. J. Clark). Whereas submissions to our first track should primarily consist of technical papers, our second track therefore encourages critical essays or extended abstracts from art historians, artists, cultural historians, media theorists and computer scientists.

The purpose of this workshop is to bring together leading researchers in the fields of computer vision and the digital humanities with art and cultural historians and artists, to promote interdisciplinary collaborations, and to expose the hybrid community to cutting-edge techniques and open problems on both sides of this fascinating area of study.

This one-day workshop, held in conjunction with ECCV 2018, calls for high-quality, previously unpublished work related to Computer Vision and Cultural History. Submissions for both tracks should conform to the ECCV 2018 proceedings style. Papers must be submitted online through the CMT submission system at:

https://cmt3.research.microsoft.com/VISART2018/

and will be double-blind peer reviewed by at least three reviewers.

TOPICS include but are not limited to:

- Art History and Computer Vision

- 3D reconstruction from visual art or historical sites

- Artistic style transfer from artworks to images and 3D scans

- 2D and 3D human pose estimation in art

- Image and visual representation in art

- Computer Vision for cultural heritage applications

- Authentication, forensics and dating

- Big-data analysis of art

- Media content analysis and search

- Visual Question & Answering (VQA) or Captioning for Art

- Visual human-machine interaction for Cultural Heritage

- Multimedia databases and digital libraries for artistic and art-historical research

- Interactive 3D media and immersive AR/VR environments for Cultural Heritage

- Digital recognition, analysis or augmentation of historical maps

- Security and legal issues in the digital presentation and distribution of cultural information

- Surveillance and behaviour analysis in Galleries, Libraries, Archives and Museums

INVITED SPEAKERS

- Peter Bell (Professor of Digital Humanities - Art History, Friedrich-Alexander University Erlangen-Nürnberg)

- Bjorn Ommer (Professor of Computer Vision, Heidelberg)

- Eva-Maria Seng (Chair of Tangible and Intangible Heritage, Faculty of Cultural Studies, University of Paderborn)

- More speakers TBC

PROGRAM COMMITTEE

To be confirmed.

ORGANIZERS

- Alessio Del Bue, Istituto Italiano di Tecnologia (IIT)

- Leonardo Impett, EPFL & Bibliotheca Hertziana, Max Planck Institute for Art History

- Stuart James, Istituto Italiano di Tecnologia (IIT)

- Peter Hall, University of Bath

- Joao Paulo Costeira, ISR, Instituto Superior Técnico

- Peter Bell, Friedrich-Alexander University Erlangen-Nürnberg