20 Oct 2014

.

tech

.

Why I am a resolution junky

Comments

I have been coding for many years now (scarily > 15 years), I have always aimed to get higher and higher resolution screens or alternatively multiple screens. Sadly as mentioned in an earlier blog post the computer I am using at the moment is a desktop replacement laptop with a dying screen so I purchased a 27" 2560x1440, not quite my laptops 3200x1800 but still pretty reasonable and great for late night coding! The above is an example of me geeking out, library coding and demo/test rig coding in parallel

26 Sep 2014

.

tech

.

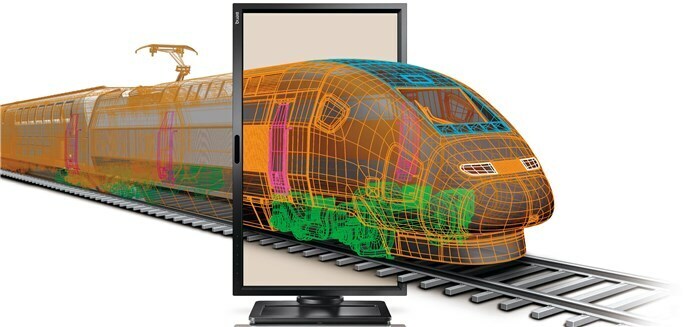

New Toys Sept’14 Part 1 of 2 – BenQ BL2710PT 27” WQHD

Comments

After having my beast of a desktop replacement laptop (ASUS n73sv) for a few years now the screen has become a bit temperamental. This and the fact I am using Lenovo Yoga2 Pro ultra book more and more (due to portability/screen res) has resulted in a void in powerful home processing.

Therefore I felt to solve this problem I would get a beefy screen to allow me to work more comfortably at home and in theory write more of my thesis (instead of this blog… shh). I wanted something above the 1920x1080 resolution, since I know that two screens isn’t an option used in conjunction with the old ASUS. Two competitors came to the fore the BenQ BL2710PT and a Dell, oddly the Dell had very bad reversed when used over HDMI and you have to do a lot of hard work to get over the locked down 1080p. So I opted for the BenQ and am very happy with the results.

The BenQ BL2710PT 27”

No one would ever say this is a beautiful monitor, but they would say it is very functional. It does what it needs and has wonderful colour and good true-blacks. Although my graphics card wasn’t over the moon about running at 1440 after a little convincing was happy to run 1440 @ 55Hz (don’t ask why not 60, I couldn’t coax it into getting up to that). I read some reviews of the equivalent Dell that you can only get 30Hz over HDMI with some converters and that it is fine if you increase the mouse rate, that is complete rubbish it feels laggy at 30Hz for a production machine it is simply not good enough.

The one criticism I have is the inbuilt speakers, they are pretty terrible (not that I have had much good experience with in screen-speakers). You have to remember this is marketed as a CAD monitor so you wouldn’t expect this to be a high interest feature. The addition of 2 USB3 ports on this side of the screen is a nice touch very useful extending out your storage options.

Some kind of conclusion

It is hard to have a decisive exciting conclusion from a screen, it does what it is supposed to. Would I opt for two monitors over this definitely, if you don’t have that as an option or you want to watch movies in bed then this is a great screen for you to get that extra screen estate. The only real hurdle to this screen is price, at £400 it is steep especially when you think a 1080 screen will set you back £150, a couple of those comes in a lot cheaper for more screen real-estate.

I do know there are some entry level UltraHD( 3840x2160 ) screens often these are locked to 30Hz. Ignoring that issue, I simply didn’t need it my GPU was being pushed to max-resolution poor little GeForce 560m so I opted to save the money and probably get a better UHD screen later when I get something to power it.

07 Jul 2014

.

research

.

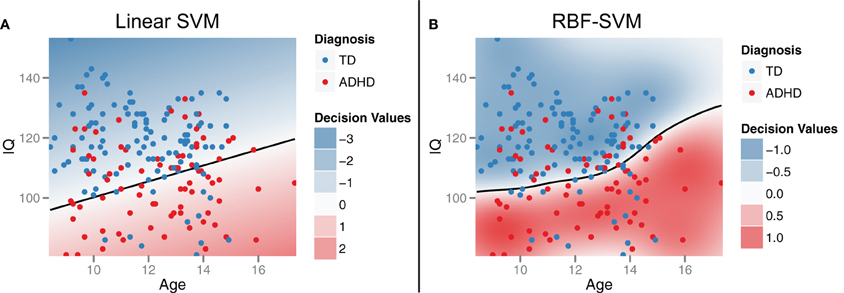

libSVM linear kernel normalisation

Comments

I have used a variety of tools for binary, multiclass and even incremental SVM problems, today I found something quite nice in binary case for libSVM, although potentially a source of confusion.

It is common in machine learning to apply a sigmoid function to normalise the boundaries of a problem, this can by empirically defining the upper and lower bound or through experimentation. Within libSVM they do this through experimentation, that is great to save you some time. The only thing to remember is it means through the use of random and cross validation with small sets of data, you are likely to get different results on each run.

So the function to consider is this:

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

// Cross-validation decision values for probability estimates

static void svm_binary_svc_probability(

const svm_problem *prob, const svm_parameter *param,

double Cp, double Cn, double& probA, double& probB)

{

int i;

int nr_fold = 5;

int *perm = Malloc(int,prob->l);

double *dec_values = Malloc(double,prob->l);

// random shuffle

for(i=0;i<prob->l;i++) perm[i]=i;

for(i=0;i<prob->l;i++)

{

int j = i+rand()%(prob->l-i);

swap(perm[i],perm[j]);

}

for(i=0;i<nr_fold;i++)

{

int begin = i*prob->l/nr_fold;

int end = (i+1)*prob->l/nr_fold;

int j,k;

struct svm_problem subprob;

subprob.l = prob->l-(end-begin);

subprob.x = Malloc(struct svm_node*,subprob.l);

subprob.y = Malloc(double,subprob.l);

k=0;

for(j=0;j<begin;j++)

{

subprob.x[k] = prob->x[perm[j]];

subprob.y[k] = prob->y[perm[j]];

++k;

}

for(j=end;j<prob->l;j++)

{

subprob.x[k] = prob->x[perm[j]];

subprob.y[k] = prob->y[perm[j]];

++k;

}

int p_count=0,n_count=0;

for(j=0;j<k;j++)

if(subprob.y[j]>0)

p_count++;

else

n_count++;

if(p_count==0 && n_count==0)

for(j=begin;j<end;j++)

dec_values[perm[j]] = 0;

else if(p_count > 0 && n_count == 0)

for(j=begin;j<end;j++)

dec_values[perm[j]] = 1;

else if(p_count == 0 && n_count > 0)

for(j=begin;j<end;j++)

dec_values[perm[j]] = -1;

else

{

svm_parameter subparam = *param;

subparam.probability=0;

subparam.C=1.0;

subparam.nr_weight=2;

subparam.weight_label = Malloc(int,2);

subparam.weight = Malloc(double,2);

subparam.weight_label[0]=+1;

subparam.weight_label[1]=-1;

subparam.weight[0]=Cp;

subparam.weight[1]=Cn;

struct svm_model *submodel = svm_train(&subprob,&subparam);

for(j=begin;j<end;j++)

{

svm_predict_values(submodel,prob->x[perm[j]],&(dec_values[perm[j]]));

// ensure +1 -1 order; reason not using CV subroutine

dec_values[perm[j]] *= submodel->label[0];

}

svm_free_and_destroy_model(&submodel);

svm_destroy_param(&subparam);

}

free(subprob.x);

free(subprob.y);

}

sigmoid_train(prob->l,dec_values,prob->y,probA,probB);

free(dec_values);

free(perm);

}

So if you have few numbers of samples, that is the case in some circumstances then the cross validation is where you hit problems. Of course you can simply re-implement it yourself or you can add a few lines to stop cross validation if the number of samples is too few.<p>

</p><p>Not the most elegant of code, but for the moment it will do. I choose to completely seperate the two steps as opposed to multiple if’s</p><pre class="brush: c++; toolbar: false">static void svm_binary_svc_probability(

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

78

79

80

81

82

83

84

85

86

87

88

89

90

91

92

93

94

95

96

97

98

99

100

101

102

103

104

105

106

107

108

109

110

111

112

113

114

115

116

117

118

119

120

121

122

123

124

125

126

127

128

129

130

131

132

133

134

135

136

137

138

139

140

141

142

143

144

145

146

147

148

149

150

151

152

const svm_problem *prob, const svm_parameter *param,

double Cp, double Cn, double& probA, double& probB)

{

int i;

int nr_fold = 5;

int *perm = Malloc(int,prob->l);

double *dec_values = Malloc(double,prob->l);

// random shuffle

for(i=0;i<prob->l;i++) perm[i]=i;

for(i=0;i<prob->l;i++)

{

int j = i+rand()%(prob->l-i);

swap(perm[i],perm[j]);

}

if (prob->l < (5*nr_fold)){

int begin = 0;

int end = prob->l;

int j,k;

struct svm_problem subprob;

subprob.l = prob->l;

subprob.x = Malloc(struct svm_node*,subprob.l);

subprob.y = Malloc(double,subprob.l);

k=0;

for(j=0;j<prob->l;j++)

{

subprob.x[k] = prob->x[perm[j]];

subprob.y[k] = prob->y[perm[j]];

++k;

}

int p_count=0,n_count=0;

for(j=0;j<k;j++)

if(prob->y[j]>0)

p_count++;

else

n_count++;

if(p_count==0 && n_count==0)

for(j=begin;j<end;j++)

dec_values[perm[j]] = 0;

else if(p_count > 0 && n_count == 0)

for(j=begin;j<end;j++)

dec_values[perm[j]] = 1;

else if(p_count == 0 && n_count > 0)

for(j=begin;j<end;j++)

dec_values[perm[j]] = -1;

else

{

svm_parameter subparam = *param;

subparam.probability=0;

subparam.C=1.0;

subparam.nr_weight=2;

subparam.weight_label = Malloc(int,2);

subparam.weight = Malloc(double,2);

subparam.weight_label[0]=+1;

subparam.weight_label[1]=-1;

subparam.weight[0]=Cp;

subparam.weight[1]=Cn;

struct svm_model *submodel = svm_train(&subprob,&subparam);

for(j=begin;j<end;j++)

{

svm_predict_values(submodel,prob->x[perm[j]],&(dec_values[perm[j]]));

// ensure +1 -1 order; reason not using CV subroutine

dec_values[perm[j]] *= submodel->label[0];

}

svm_free_and_destroy_model(&submodel);

svm_destroy_param(&subparam);

}

free(subprob.x);

free(subprob.y);

}else{

for(i=0;i<nr_fold;i++)

{

int begin = i*prob->l/nr_fold;

int end = (i+1)*prob->l/nr_fold;

if (nr_fold == 1){

begin = 0 ;

end = prob->l;

}

int j,k;

struct svm_problem subprob;

subprob.l = prob->l-(end-begin);

subprob.x = Malloc(struct svm_node*,subprob.l);

subprob.y = Malloc(double,subprob.l);

k=0;

for(j=0;j<begin;j++)

{

subprob.x[k] = prob->x[perm[j]];

subprob.y[k] = prob->y[perm[j]];

++k;

}

for(j=end;j<prob->l;j++)

{

subprob.x[k] = prob->x[perm[j]];

subprob.y[k] = prob->y[perm[j]];

++k;

}

int p_count=0,n_count=0;

for(j=0;j<k;j++)

if(subprob.y[j]>0)

p_count++;

else

n_count++;

if(p_count==0 && n_count==0)

for(j=begin;j<end;j++)

dec_values[perm[j]] = 0;

else if(p_count > 0 && n_count == 0)

for(j=begin;j<end;j++)

dec_values[perm[j]] = 1;

else if(p_count == 0 && n_count > 0)

for(j=begin;j<end;j++)

dec_values[perm[j]] = -1;

else

{

svm_parameter subparam = *param;

subparam.probability=0;

subparam.C=1.0;

subparam.nr_weight=2;

subparam.weight_label = Malloc(int,2);

subparam.weight = Malloc(double,2);

subparam.weight_label[0]=+1;

subparam.weight_label[1]=-1;

subparam.weight[0]=Cp;

subparam.weight[1]=Cn;

struct svm_model *submodel = svm_train(&subprob,&subparam);

for(j=begin;j<end;j++)

{

svm_predict_values(submodel,prob->x[perm[j]],&(dec_values[perm[j]]));

// ensure +1 -1 order; reason not using CV subroutine

dec_values[perm[j]] *= submodel->label[0];

}

svm_free_and_destroy_model(&submodel);

svm_destroy_param(&subparam);

}

free(subprob.x);

free(subprob.y);

}

}

sigmoid_train(prob->l,dec_values,prob->y,probA,probB);

free(dec_values);

free(perm);

}

As with a lot of my code based posts, this is more for my memory than anything, but hopefully may help people unlock the secrets of libSVM.

24 Jun 2014

.

tech

.

Wolfram Programming Cloud Beta goes live

Comments

Wolfram Alpha is incredibly useful source of information, when it was announced they would produce a flexible programming cloud it was of great interest to me. With the release I jumped on to see what it was like.

So I played around with a few examples under their free account to see what was possible, then after 5 minutes I thought I would try to put a mini demo up for this blog post. The functionality is quite powerful exploiting rich social media structures, looks really impressive and something I would be interested in exploiting, but as soon as I tried to do something simple I got this:

<img class="img-responsive" style="max-width: 100%;, height: auto; display: block;"title="image" alt="image" src="http://stuartjames.info/Data/Sites/5/media/wlw/image4_thumb.png">

Where I would draw your attention to:

So well the free account is useless… better luck next time Wolfram you didn’t get me addicted to this!